Knowing the above, we can discard records with a null gravatar_id during parsing.

`INSERT INTO github_users FROM azure_storage.blob_get('pgquickstart', 'github', '', NULL::github_users, options := azure_storage.options_csv_get(force_not_null := ARRAY))`

Without an access key, we won't be allowed to list containers that are set to Private or Blob access levels.

`SELECT * FROM azure_storage.blob_list('mystorageaccount','privdatasets');`

`ERROR: azure_storage: missing account access key`

Internally COPY uses the blob_get function, which you can use directly to manipulate data in more complex scenarios. In the above query, the file is fully fetched before LIMIT 3 is applied. With this function, you can manipulate data on the fly in complex queries, and do imports as INSERT FROM SELECT.

`SELECT user_id, url, UPPER(login), avatar_url, gravatar_id, display_login FROM azure_storage.blob_get('pgquickstart', 'github', '', NULL::github_users)`

In the above command, we filtered the data to accounts with a gravatar_id present and upper-cased their logins on the fly.

In some situations, you may need to control exactly what blob_get attempts to do by using the decoder, compression and options parameters.

Decoder can be set to auto (default) or to a specific format. Compression can be either auto (default), none or gzip. Finally, the options parameter is of type jsonb. There are four utility functions that help build values for it. Each utility function is designated for the decoder matching its name.

By looking at the function definitions, you can see which parameters are supported by which decoder:

options_csv_get - delimiter, null_string, header, quote, escape, force_not_null, force_null, content_encoding

options_copy - delimiter, null_string, header, quote, escape, force_quote, force_not_null, force_null, content_encoding

options_tsv - delimiter, null_string, content_encoding
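To make the decoder, compression and options parameters concrete, here is a minimal sketch of calling blob_get with all three set explicitly instead of leaving them on auto. It assumes the github_users table already exists, that the three parameters can be passed by name, that 'csv' is an accepted decoder value, and that header takes a boolean. The blob path is a hypothetical placeholder, not a file named in this how-to.

```sql
-- Sketch only: the blob path is a hypothetical placeholder.
-- Assumes github_users exists and that decoder, compression and
-- options are accepted as named arguments.
SELECT user_id, login
FROM azure_storage.blob_get(
       'pgquickstart',               -- storage account (from the text)
       'github',                     -- container (from the text)
       'path/to/users-file.csv.gz',  -- hypothetical blob path
       NULL::github_users,           -- row type that shapes the output columns
       decoder     := 'csv',         -- assumed decoder value
       compression := 'gzip',        -- one of auto, none, gzip
       options     := azure_storage.options_csv_get(
                        header    := true,  -- assumed boolean parameter
                        delimiter := ','
                      )
     )
LIMIT 3;
```

As noted in the text, the file is still fetched in full before LIMIT is applied, so this is useful for inspecting parsing behaviour rather than for saving bandwidth.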

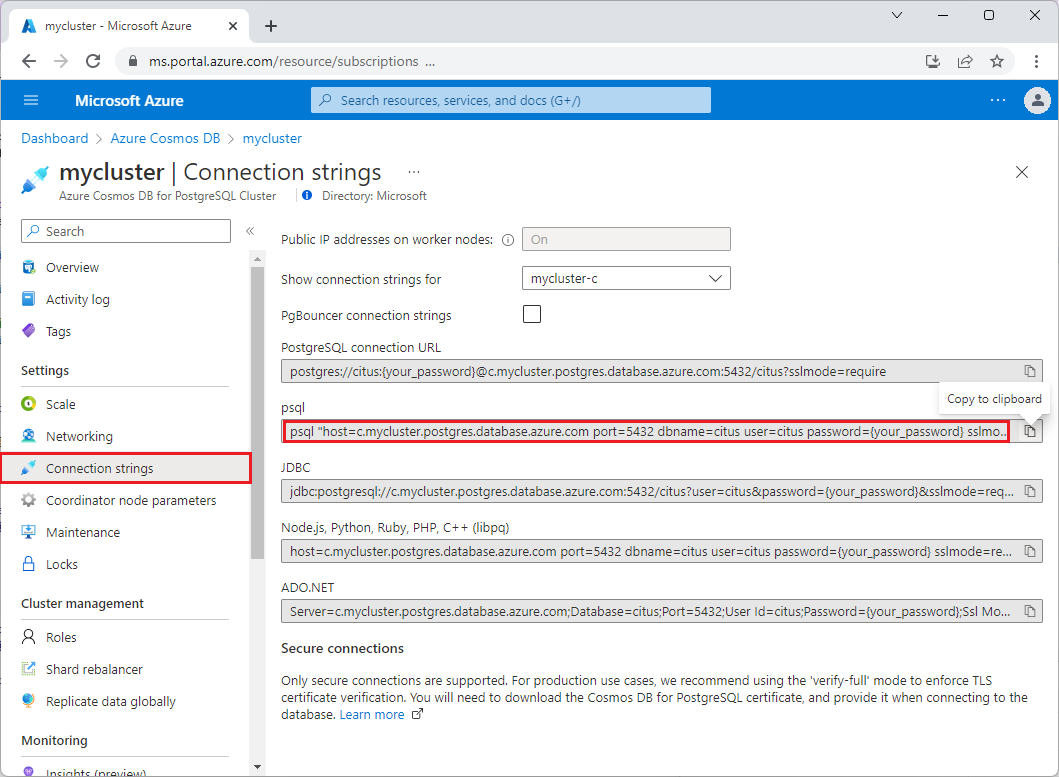

There's a demonstration Azure Blob Storage account and container pre-created for this how-to. The container's name is github, and it's in the pgquickstart account. We can easily see which files are present in the container by using the azure_storage.blob_list(account, container) function.

`SELECT path, bytes, pg_size_pretty(bytes), content_type FROM azure_storage.blob_list('pgquickstart','github');`

You can filter the output either by using a regular SQL WHERE clause, or by using the prefix parameter of the blob_list UDF. The latter filters the returned rows on the Azure Blob Storage side.

Listing container contents requires an account and access key, or a container with anonymous access enabled.

`SELECT * FROM azure_storage.blob_list('pgquickstart','github','e');`

Load data from ABS

Load data with the COPY command

`CREATE TABLE github_users`

`CREATE INDEX event_type_index ON github_events (event_type);`

`CREATE INDEX payload_index ON github_events USING GIN (payload jsonb_path_ops);`

`SELECT create_distributed_table('github_users', 'user_id');`

`SELECT create_distributed_table('github_events', 'user_id');`

Loading data into the tables becomes as simple as calling the COPY command. Notice how the extension recognized that the URLs provided to the COPY command are from Azure Blob Storage. The files we pointed to were gzip compressed, and that was also handled automatically for us.

The COPY command supports more parameters and formats. In the above example, the format and compression were auto-selected based on the file extensions. You can, however, provide the format directly, similar to the regular COPY command.

`COPY github_users`

Currently the extension supports the following file formats: comma-separated values, the format used by PostgreSQL COPY; tab-separated values, the default PostgreSQL COPY format; and a file containing a single text value (for example, large JSON or XML).

The COPY command is convenient, but limited in flexibility.
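The two ways of filtering blob_list output described above can be sketched side by side. This is an illustration under the assumption that the demonstration pgquickstart account and github container are reachable. Both queries should return the same blobs, but the first filters in PostgreSQL after the full listing is returned, while the second passes the prefix to Azure Blob Storage.

```sql
-- Client-side filter: the full listing is fetched, then narrowed by WHERE.
SELECT path, bytes
FROM azure_storage.blob_list('pgquickstart', 'github')
WHERE path LIKE 'e%';

-- Server-side filter: the prefix argument is applied by Azure Blob Storage,
-- so only matching entries are returned to PostgreSQL.
SELECT path, bytes
FROM azure_storage.blob_list('pgquickstart', 'github', 'e');
```

The prefix form is likely the better choice for containers with many blobs, since fewer rows cross the network. The WHERE form remains useful for conditions a prefix cannot express, such as filtering on bytes or content_type.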
Containers set to access level "Private (no anonymous access)" or "Blob (anonymous read access for blobs only)" require an access key. Selecting "Container (anonymous read access for containers and blobs)" allows you to ingest files from Azure Blob Storage using their public URLs and to enumerate the container contents without configuring an account key in pg_azure_storage.
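For containers that do require a key, a minimal sketch of registering one might look like the following. The azure_storage.account_add function is not mentioned in the text above and is used here on the assumption that the installed version of the extension provides it. The account name reuses the mystorageaccount and privdatasets example names, and the key is a placeholder.

```sql
-- Assumption: azure_storage.account_add(account_name, account_key) is
-- available in the installed pg_azure_storage version.
-- 'SECRET_ACCESS_KEY' is a placeholder, not a real key.
SELECT azure_storage.account_add('mystorageaccount', 'SECRET_ACCESS_KEY');

-- With a key registered, listing a Private container should no longer
-- fail with "missing account access key".
SELECT path, bytes
FROM azure_storage.blob_list('mystorageaccount', 'privdatasets');
```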
